Amazon Simple Storage Service

Amazon Simple Storage Service (Amazon S3) is storage for the Internet. It is designed to make web-scale computing easier for developers.

Amazon S3 has a simple web services interface that you can use to store and retrieve any amount of data, at any time, from anywhere on the web. It gives any developer access to the same highly scalable, reliable, fast, inexpensive data storage infrastructure that Amazon uses to run its own global network of web sites. The service aims to maximize benefits of scale and to pass those benefits on to developers.


AWS Identity and Access Management (IAM) enables you to manage access to AWS services and resources securely. Using IAM, you can create and manage AWS users and groups, and use permissions to allow and deny their access to AWS resources.


Installation & Setup

Prerequisites

To use the AWS SDK for Java, you must have:

- An AWS Free Tier account. Register on the AWS website; this enables you to gain free, hands-on experience with the AWS platform, products, and services.

- A suitable Java development environment.

- An AWS account and access keys. For instructions, see Sign Up for AWS and Create an IAM User.

- AWS credentials (access keys) set in your environment or in the shared credentials file (used by the AWS CLI and other SDKs). For more information, see Set up AWS Credentials and Region for Development.

Steps

A> Install Maven

- Visit the official Maven website, download the Maven zip file and extract it.

- Add a MAVEN_HOME variable to the Windows environment variables and point it to your Maven folder.

- Update the PATH variable by appending the Maven bin folder, %MAVEN_HOME%\bin, so that you can run Maven commands from any directory.

- Done. To verify the installation, run mvn -version in the command prompt.

B> AWS Toolkit

Prerequisites

- An Amazon Web Services account – To obtain an AWS account, go to the AWS home page and click Sign Up Now. Signing up will enable you to use all of the services offered by AWS.

- A supported operating system – The AWS Toolkit for Eclipse is supported on Windows, Linux, macOS, and Unix.

- Java 1.8 or later

- Eclipse IDE for Java Developers 4.2 or later – AWS attempts to keep the AWS Toolkit for Eclipse current with the default version available on the Eclipse download page.

Steps

1. Install the AWS Toolkit for Eclipse.

2. After a successful installation, you will see a new AWS Toolkit icon in the Eclipse menu bar.

3. Create an AWS Java Project and select the checkboxes for the Amazon services your project needs.

4. Go to AWS Toolkit > Preferences and fill in your credential details.

Getting Started

AWS IAM (Identity and Access Management)

AWS has a list of best practices to help IT professionals and developers manage access to AWS resources.

Users – Create individual users.

Groups – Manage permissions with groups.

Permissions – Grant least privilege.

Auditing – Turn on AWS CloudTrail.

Password – Configure a strong password policy.

MFA – Enable MFA for privileged users.

Roles – Use IAM roles for Amazon EC2 instances.

Sharing – Use IAM roles to share access.

Rotate – Rotate security credentials regularly.

Conditions – Restrict privileged access further with conditions.

Root – Reduce or remove use of root.
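Several of these practices (least privilege, managing permissions through groups) come down to how IAM combines the Allow and Deny statements in the policies attached to a user. The Java sketch below models only the core rule: an explicit Deny overrides any Allow, and a request that no statement allows is implicitly denied. The class and method names are hypothetical, the `*` wildcard handling is simplified to plain case-sensitive matching, and real IAM evaluation also considers conditions, resource-based policies, permission boundaries and more.

```java
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical, simplified model of IAM Allow/Deny evaluation (not the real IAM engine).
final class PolicyEvaluationSketch {

    enum Effect { ALLOW, DENY }

    /** One policy statement: an effect, an action pattern and a resource pattern. */
    static final class Statement {
        final Effect effect;
        final String action;
        final String resource;

        Statement(Effect effect, String action, String resource) {
            this.effect = effect;
            this.action = action;
            this.resource = resource;
        }
    }

    /** Matches a pattern in which '*' stands for any run of characters. */
    static boolean matches(String pattern, String value) {
        String[] parts = pattern.split("\\*", -1);
        StringBuilder regex = new StringBuilder();
        for (int i = 0; i < parts.length; i++) {
            if (i > 0) {
                regex.append(".*");              // each '*' becomes ".*"
            }
            regex.append(Pattern.quote(parts[i])); // everything else is literal
        }
        return Pattern.matches(regex.toString(), value);
    }

    /** Explicit Deny wins; otherwise a matching Allow is required (implicit deny). */
    static boolean isAllowed(List<Statement> statements, String action, String resource) {
        boolean allowed = false;
        for (Statement s : statements) {
            if (matches(s.action, action) && matches(s.resource, resource)) {
                if (s.effect == Effect.DENY) {
                    return false;                // an explicit Deny always wins
                }
                allowed = true;                  // remember the Allow, keep looking for a Deny
            }
        }
        return allowed;                          // no matching Allow means implicit deny
    }

    public static void main(String[] args) {
        // Least privilege: read-only access to one hypothetical bucket, minus a private prefix.
        List<Statement> policy = Arrays.asList(
                new Statement(Effect.ALLOW, "s3:Get*", "arn:aws:s3:::example-bucket/*"),
                new Statement(Effect.DENY, "s3:*", "arn:aws:s3:::example-bucket/private/*"));

        System.out.println(isAllowed(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/notes.txt"));   // true
        System.out.println(isAllowed(policy, "s3:GetObject", "arn:aws:s3:::example-bucket/private/key")); // false
        System.out.println(isAllowed(policy, "s3:PutObject", "arn:aws:s3:::example-bucket/notes.txt"));   // false
    }
}
```

The policy in main is the kind of narrow grant the least-privilege practice recommends, as opposed to a broad grant such as s3:* on every resource.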

Steps to Create an IAM User

1. Go to the AWS Management Console and open IAM.

2. Create a group and attach the AmazonS3FullAccess policy to it.

3. Create a user and add it to the group.

Sample Code for Amazon S3

```java
package com.amazonaws.samples;

/*
 * Copyright 2010-2017 Amazon.com, Inc. or its affiliates. All Rights Reserved.
 */

import java.io.BufferedReader;
import java.io.File;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.util.UUID;

import com.amazonaws.AmazonClientException;
import com.amazonaws.AmazonServiceException;
import com.amazonaws.auth.AWSCredentials;
import com.amazonaws.auth.AWSStaticCredentialsProvider;
import com.amazonaws.auth.profile.ProfileCredentialsProvider;
import com.amazonaws.services.s3.AmazonS3;
import com.amazonaws.services.s3.AmazonS3ClientBuilder;
import com.amazonaws.services.s3.model.Bucket;
import com.amazonaws.services.s3.model.GetObjectRequest;
import com.amazonaws.services.s3.model.ListObjectsRequest;
import com.amazonaws.services.s3.model.ObjectListing;
import com.amazonaws.services.s3.model.PutObjectRequest;
import com.amazonaws.services.s3.model.S3Object;
import com.amazonaws.services.s3.model.S3ObjectSummary;

public class S3Sample {

    public static void main(String[] args) throws IOException {
        // Load the access keys of the "default" profile from the shared credentials file.
        AWSCredentials credentials;
        try {
            credentials = new ProfileCredentialsProvider("default").getCredentials();
        } catch (Exception e) {
            throw new AmazonClientException(
                    "Cannot load the credentials from the credential profiles file. " +
                    "Please make sure that your credentials file is at the correct " +
                    "location (~/.aws/credentials, or %USERPROFILE%\\.aws\\credentials on Windows), " +
                    "and is in valid format.",
                    e);
        }

        AmazonS3 s3 = AmazonS3ClientBuilder.standard()
                .withCredentials(new AWSStaticCredentialsProvider(credentials))
                .withRegion("us-west-2")
                .build();

        // Bucket names must be globally unique, so append a random UUID.
        String bucketName = "my-first-s3-bucket-" + UUID.randomUUID();
        String key = "MyObjectKey";

        System.out.println("===========================================");
        System.out.println("Getting Started with Amazon S3");
        System.out.println("===========================================\n");

        try {
            // Create a new bucket.
            System.out.println("Creating bucket " + bucketName + "\n");
            s3.createBucket(bucketName);

            // List all buckets owned by the account.
            System.out.println("Listing buckets");
            for (Bucket bucket : s3.listBuckets()) {
                System.out.println(" - " + bucket.getName());
            }
            System.out.println();

            // Upload a temporary local file as the object "MyObjectKey".
            System.out.println("Uploading a new object to S3 from a file\n");
            s3.putObject(new PutObjectRequest(bucketName, key, createSampleFile()));

            // Download the object. S3Object is Closeable; closing it releases the connection.
            System.out.println("Downloading an object");
            try (S3Object object = s3.getObject(new GetObjectRequest(bucketName, key))) {
                System.out.println("Content-Type: " + object.getObjectMetadata().getContentType());
                displayTextInputStream(object.getObjectContent());
            }

            // List objects whose keys start with "My" (first page of results only).
            System.out.println("Listing objects");
            ObjectListing objectListing = s3.listObjects(new ListObjectsRequest()
                    .withBucketName(bucketName)
                    .withPrefix("My"));
            for (S3ObjectSummary objectSummary : objectListing.getObjectSummaries()) {
                System.out.println(" - " + objectSummary.getKey() + " " +
                        "(size = " + objectSummary.getSize() + ")");
            }
            System.out.println();

            // A bucket must be empty before it can be deleted, so delete the object first.
            System.out.println("Deleting an object\n");
            s3.deleteObject(bucketName, key);

            System.out.println("Deleting bucket " + bucketName + "\n");
            s3.deleteBucket(bucketName);
        } catch (AmazonServiceException ase) {
            // The request reached S3 but was rejected (e.g. access denied, bucket name taken).
            System.out.println("Caught an AmazonServiceException, which means your request made it "
                    + "to Amazon S3, but was rejected with an error response for some reason.");
            System.out.println("Error Message: " + ase.getMessage());
            System.out.println("HTTP Status Code: " + ase.getStatusCode());
            System.out.println("AWS Error Code: " + ase.getErrorCode());
            System.out.println("Error Type: " + ase.getErrorType());
            System.out.println("Request ID: " + ase.getRequestId());
        } catch (AmazonClientException ace) {
            // The client could not talk to S3 at all (e.g. no network).
            System.out.println("Caught an AmazonClientException, which means the client encountered "
                    + "a serious internal problem while trying to communicate with S3, "
                    + "such as not being able to access the network.");
            System.out.println("Error Message: " + ace.getMessage());
        }
    }

    /** Creates a temporary text file with sample data to upload. */
    private static File createSampleFile() throws IOException {
        File file = File.createTempFile("aws-java-sdk-", ".txt");
        file.deleteOnExit();

        // try-with-resources closes the writer even if a write fails.
        try (Writer writer = new OutputStreamWriter(new FileOutputStream(file))) {
            writer.write("abcdefghijklmnopqrstuvwxyz\n");
            writer.write("01234567890112345678901234\n");
            writer.write("!@#$%^&*()-=[]{};':',.<>/?\n");
            writer.write("01234567890112345678901234\n");
            writer.write("abcdefghijklmnopqrstuvwxyz\n");
        }
        return file;
    }

    /**
     * Displays the contents of the specified input stream as text.
     *
     * @param input
     *            The input stream to display as text.
     *
     * @throws IOException
     */
    private static void displayTextInputStream(InputStream input) throws IOException {
        // The caller closes the underlying stream (via the S3Object).
        BufferedReader reader = new BufferedReader(new InputStreamReader(input));
        String line;
        while ((line = reader.readLine()) != null) {
            System.out.println(" " + line);
        }
        System.out.println();
    }
}
```
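The sample lists objects with .withPrefix("My"). S3 keys are flat strings, so a prefix is a plain string-prefix filter, and "folders" appear only when you also pass a delimiter (ListObjectsRequest also has a withDelimiter method). The sketch below is a hypothetical, in-memory model of that grouping, not SDK code; it ignores pagination (a single listObjects call returns at most 1,000 keys, which is why the sample says "first page").

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.SortedSet;
import java.util.TreeSet;

// Hypothetical in-memory model of prefix/delimiter listing (not the S3 implementation).
final class PrefixListingSketch {

    /** The two parts of a listing: plain keys and collapsed "folder" prefixes. */
    static final class Listing {
        final List<String> keys = new ArrayList<>();
        final SortedSet<String> commonPrefixes = new TreeSet<>();
    }

    static Listing list(List<String> allKeys, String prefix, String delimiter) {
        Listing result = new Listing();
        for (String key : new TreeSet<>(allKeys)) {   // sorted, like S3 listings of ASCII keys
            if (!key.startsWith(prefix)) {
                continue;                             // prefix is a plain string filter
            }
            int idx = (delimiter == null) ? -1 : key.indexOf(delimiter, prefix.length());
            if (idx >= 0) {
                // Everything up to and including the delimiter becomes one common prefix.
                result.commonPrefixes.add(key.substring(0, idx + delimiter.length()));
            } else {
                result.keys.add(key);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> keys = Arrays.asList("MyObjectKey", "My/notes.txt", "My/2017/a.txt", "Other.txt");

        Listing flat = list(keys, "My", null);
        System.out.println(flat.keys);                                    // [My/2017/a.txt, My/notes.txt, MyObjectKey]

        Listing grouped = list(keys, "My/", "/");
        System.out.println(grouped.keys + " " + grouped.commonPrefixes);  // [My/notes.txt] [My/2017/]
    }
}
```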

CONSOLE OUTPUT

```text
===========================================
Getting Started with Amazon S3
===========================================

Creating bucket my-first-s3-bucket-00802f76-777d-41ea-b236-650286137f26

Listing buckets
 - my-first-s3-bucket-00802f76-777d-41ea-b236-650286137f26
 - myshribucket
 - shribucket123

Uploading a new object to S3 from a file

Downloading an object
Content-Type: text/plain
 abcdefghijklmnopqrstuvwxyz
 01234567890112345678901234
 !@#$%^&*()-=[]{};':',.<>/?
 01234567890112345678901234
 abcdefghijklmnopqrstuvwxyz

Listing objects
 - MyObjectKey (size = 135)

Deleting an object

Deleting bucket my-first-s3-bucket-00802f76-777d-41ea-b236-650286137f26
```
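The size = 135 in the listing follows from createSampleFile(): it writes five lines of 26 ASCII characters, each followed by \n, so 5 × 27 = 135 bytes. This small check reproduces the arithmetic. It assumes an ASCII-compatible charset (such as UTF-8 or windows-1252), so that every character of the sample text takes one byte.

```java
import java.nio.charset.StandardCharsets;

final class SampleFileSize {

    // The same five lines that createSampleFile() writes in the sample above.
    static final String[] LINES = {
        "abcdefghijklmnopqrstuvwxyz",
        "01234567890112345678901234",
        "!@#$%^&*()-=[]{};':',.<>/?",
        "01234567890112345678901234",
        "abcdefghijklmnopqrstuvwxyz"
    };

    static int totalBytes() {
        int total = 0;
        for (String line : LINES) {
            // ASCII text: one byte per character, plus one byte for the '\n'.
            total += (line + "\n").getBytes(StandardCharsets.UTF_8).length;
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(totalBytes()); // 135
    }
}
```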

The AWS Command Line Interface (CLI) is a unified tool to manage your AWS services. With just one tool to download and configure, you can control multiple AWS services from the command line and automate them through scripts.

Steps for Install AWS CLI

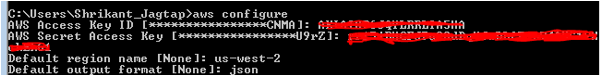

2. Check once everything has been setup correctly

Note: aws configure prompts for your AWS access key ID, secret access key, default region (here: us-west-2) and default output format (here: json). If you need different profiles, you can specify one with the --profile argument:

aws configure --profile my-second-profile
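aws configure stores the access keys in the shared credentials file (~/.aws/credentials, or %USERPROFILE%\.aws\credentials on Windows), with one [section] per profile; this is the file that ProfileCredentialsProvider("default") reads in the Java sample. To make the format concrete, here is a minimal, hypothetical parser that extracts one profile's keys from the file contents. It is not the SDK's implementation, and it ignores details such as the [profile name] headers used in ~/.aws/config.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch of reading one profile from the shared credentials file format.
final class CredentialsFileSketch {

    /** Returns the key/value pairs of the [profile] section, or an empty map if it is absent. */
    static Map<String, String> readProfile(String fileContents, String profile) {
        Map<String, String> values = new HashMap<>();
        String currentSection = null;
        for (String rawLine : fileContents.split("\\R")) {
            String line = rawLine.trim();
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";")) {
                continue; // skip blank lines and comments
            }
            if (line.startsWith("[") && line.endsWith("]")) {
                currentSection = line.substring(1, line.length() - 1).trim(); // e.g. "default"
                continue;
            }
            int eq = line.indexOf('=');
            if (eq > 0 && profile.equals(currentSection)) {
                // "aws_access_key_id = ..." -> key "aws_access_key_id", value "..."
                values.put(line.substring(0, eq).trim(), line.substring(eq + 1).trim());
            }
        }
        return values;
    }

    public static void main(String[] args) {
        // Placeholder values only; never put real keys in source code.
        String contents = String.join("\n",
                "[default]",
                "aws_access_key_id = EXAMPLE_KEY_ID_1",
                "aws_secret_access_key = EXAMPLE_SECRET_1",
                "",
                "[my-second-profile]",
                "aws_access_key_id = EXAMPLE_KEY_ID_2",
                "aws_secret_access_key = EXAMPLE_SECRET_2");

        Map<String, String> second = readProfile(contents, "my-second-profile");
        System.out.println(second.get("aws_access_key_id")); // EXAMPLE_KEY_ID_2
    }
}
```

In real code you would not parse the file by hand: passing another profile name to the SDK, as in new ProfileCredentialsProvider("my-second-profile"), selects that section instead of [default].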

A: Bucket Operations & Commands

The aws-cli tool provides high-level commands for creating and deleting buckets. Just pass s3 as the first argument to aws, followed by a shortcut such as mb (“make bucket”) or rb (“remove bucket”):

- Create a new bucket (aws s3 mb).

- Delete buckets that are already empty (aws s3 rb). Buckets with contents can be removed by adding the --force option.

- List the contents of a bucket (aws s3 ls with the bucket's s3:// URI).

- List all available buckets (aws s3 ls).

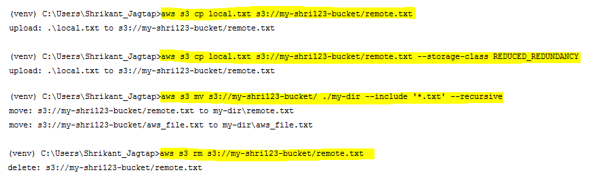
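Whether you create a bucket with aws s3 mb or with createBucket in the Java sample, the name has to follow the S3 bucket naming rules; the sample's "my-first-s3-bucket-" plus a UUID satisfies them because UUID strings use lowercase hex digits and hyphens. The method below is a hypothetical sketch that checks only a subset of those rules (3 to 63 characters; lowercase letters, digits, dots and hyphens; starting and ending with a letter or digit; no two adjacent dots; not formatted like an IP address). The full, current list in the Amazon S3 documentation also includes reserved prefixes and suffixes.

```java
import java.util.UUID;
import java.util.regex.Pattern;

// Hypothetical partial check of S3 bucket naming rules (not exhaustive).
final class BucketNameCheck {

    // Lowercase letters, digits, dots and hyphens; must start and end with a letter or digit.
    private static final Pattern ALLOWED = Pattern.compile("[a-z0-9][a-z0-9.-]*[a-z0-9]");
    // Four dot-separated groups of digits, i.e. something that looks like an IPv4 address.
    private static final Pattern IP_LIKE = Pattern.compile("\\d+\\.\\d+\\.\\d+\\.\\d+");

    static boolean isValid(String name) {
        return name.length() >= 3
                && name.length() <= 63
                && ALLOWED.matcher(name).matches()
                && !name.contains("..")
                && !IP_LIKE.matcher(name).matches();
    }

    public static void main(String[] args) {
        String generated = "my-first-s3-bucket-" + UUID.randomUUID(); // as in the Java sample
        System.out.println(generated.length() + " " + isValid(generated)); // 55 true
        System.out.println(isValid("My_Bucket"));   // false: uppercase letters and underscore
        System.out.println(isValid("192.168.5.4")); // false: formatted like an IP address
    }
}
```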

B: Object Operations & Commands

The command line interface supports high-level operations for uploading, moving and removing objects.

- Upload a local file to S3 (aws s3 cp).

- Specify the storage class for the new remote object (--storage-class).

- Remove the new file with the rm command (aws s3 rm).

- Move complete sets of files from S3 to the local machine (aws s3 mv with --recursive).

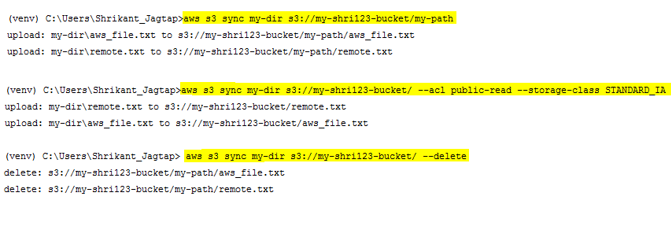
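S3 has no real directories: when you copy or move a whole local folder with --recursive, each file becomes an object whose key is the destination prefix plus the file's path relative to that folder, with / as the separator. The helper below is a hypothetical sketch of that mapping using java.nio.file; it does not model the CLI's include/exclude filters or any other options.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical sketch: how a file inside a local folder maps to an S3 object key.
final class KeyMappingSketch {

    /** Builds keyPrefix + relative path of file under baseDir, always using '/' separators. */
    static String toKey(Path baseDir, Path file, String keyPrefix) {
        Path relative = baseDir.relativize(file);
        StringBuilder key = new StringBuilder(keyPrefix);
        for (Path part : relative) {               // iterates the path's name elements
            if (key.length() > 0 && key.charAt(key.length() - 1) != '/') {
                key.append('/');                   // S3 keys use '/', whatever the OS separator
            }
            key.append(part.toString());
        }
        return key.toString();
    }

    public static void main(String[] args) {
        Path base = Paths.get("photos");
        Path file = Paths.get("photos", "2017", "img1.jpg");
        System.out.println(toKey(base, file, "backup/")); // backup/2017/img1.jpg
    }
}
```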

C: Synchronization Operations & Commands

Often it is useful to synchronize complete folder structures and their content, either from the local machine to S3 or vice versa. For this, the aws-cli tool comes with the handy sync command.

- sync uploads files that are missing in S3 and updates all files in S3 that have a different size and/or modification timestamp than the one in the local directory.

- Because sync does not remove objects from S3 by default, you must specify the --delete option to let the tool also remove files in S3 that are not present in your local copy.

- When synchronizing your local copy with the remote files in S3, you can also specify the storage class and the access privileges.
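To make the sync rules concrete, this hypothetical Java sketch computes what a local-to-S3 sync would do, given the size and last-modified time of each local file and each S3 object. It assumes the rule the AWS CLI documentation describes for uploads (the object is missing, the size differs, or the local file is newer) and only deletes remote objects when the --delete behavior is requested. It is a simplified model, not the CLI's implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical model of a local-to-S3 sync plan (not the AWS CLI implementation).
final class SyncPlanSketch {

    /** Size in bytes and last-modified time (epoch millis) of a local file or S3 object. */
    static final class Entry {
        final long size;
        final long lastModified;

        Entry(long size, long lastModified) {
            this.size = size;
            this.lastModified = lastModified;
        }
    }

    /** Returns actions such as "upload a.txt" or "delete old.txt", in key order. */
    static List<String> plan(Map<String, Entry> local, Map<String, Entry> remote, boolean delete) {
        List<String> actions = new ArrayList<>();
        for (Map.Entry<String, Entry> e : new TreeMap<>(local).entrySet()) {
            Entry l = e.getValue();
            Entry r = remote.get(e.getKey());
            // Upload new files, files whose size changed, and files modified after the upload.
            if (r == null || r.size != l.size || l.lastModified > r.lastModified) {
                actions.add("upload " + e.getKey());
            }
        }
        if (delete) {
            // Only with --delete: remove objects that no longer exist locally.
            for (String key : new TreeMap<>(remote).keySet()) {
                if (!local.containsKey(key)) {
                    actions.add("delete " + key);
                }
            }
        }
        return actions;
    }

    public static void main(String[] args) {
        Map<String, Entry> local = new TreeMap<>();
        local.put("a.txt", new Entry(10, 2000));   // unchanged
        local.put("b.txt", new Entry(12, 3000));   // size changed
        local.put("c.txt", new Entry(5, 1000));    // new file
        Map<String, Entry> remote = new TreeMap<>();
        remote.put("a.txt", new Entry(10, 2000));
        remote.put("b.txt", new Entry(11, 2000));
        remote.put("old.txt", new Entry(7, 500));  // no longer exists locally

        System.out.println(plan(local, remote, false)); // [upload b.txt, upload c.txt]
        System.out.println(plan(local, remote, true));  // [upload b.txt, upload c.txt, delete old.txt]
    }
}
```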

Reference: https://aws.amazon.com/getting-started/
